Hello! I need a guide on how to migrate data from shared hosting to Docker. All the guides I can find are about migrating Docker containers, though! I am going to use a PaaS, CapRover, which sets up everything. Can I just import my data into the regular filesystem, or do the containers have sandboxed filesystems? Thanks!

https://docs.docker.com/storage/volumes/

Just move your data and then either create bind mounts to those directories or create a new volume in docker and copy the data to the volume path in your filesystem.
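Both options from the comment above can be sketched in shell. This is a rough sketch, not a definitive recipe: the function names, paths, volume name and image are placeholders, and the `DOCKER` variable is only there so the commands can be dry-run with `DOCKER=echo`.

```shell
#!/usr/bin/env bash
# Sketch only: every path, volume name and image below is a placeholder.
DOCKER="${DOCKER:-docker}"   # set DOCKER=echo to print commands instead of running them

# Option 1: bind mount. The container reads and writes the host directory directly.
run_with_bind_mount() {
  local host_dir="$1" container_dir="$2" image="$3"
  $DOCKER run -d -v "$host_dir:$container_dir" "$image"
}

# Option 2: named volume. Create it, ask Docker where it lives on the host,
# then copy the data into that path (this usually needs root).
copy_into_volume() {
  local src_dir="$1" volume="$2" mountpoint
  $DOCKER volume create "$volume" >/dev/null
  mountpoint="$($DOCKER volume inspect --format '{{ .Mountpoint }}' "$volume")"
  # "src/." copies the directory contents, including dotfiles
  cp -a "$src_dir/." "$mountpoint/"
}
```

For example, `run_with_bind_mount /srv/site /var/www/html wordpress` would start a container that sees `/srv/site` at `/var/www/html`, while `copy_into_volume /srv/site site_data` would fill a volume named `site_data` that a container can then mount with `-v site_data:/var/www/html`.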

I also suggest looking into Podman instead of Docker. It’s basically a drop-in replacement for Docker.

Podman definitely isn’t a drop in replacement, it’s like 90% there.

What’s the last 10%?

From my experience, a bit of systemd config.

Serious question: why change? Doesn’t docker do the job (isn’t it FOSS)?

I’ll consider it a drop-in replacement when Kubernetes can use it.

Not sure what you mean, Podman isn’t a container runtime and Kubernetes has deprecated it’s docker shim anyway.

Its* docker shim

lol you’re not wrong but was it worth saying?

Yes. Very much. I understand I’m being pedantic, but I don’t really do it to bash on the writer. I do it for me. It’s like an itch. I see “its” being wrongly used, and writing it the correct way is like scratching that itch.

Does it make a difference? Who knows. Some people tell me to go eat dicks, some people thank me because they either didn’t know the difference, or it was a typo (ironically, I’ve made this very mistake in the past!)

Also, I understand that languages evolve, so who knows, maybe “it’s” instead of “its” will become the norm. But at the moment, I find it bothersome (like “your/you’re” and “would of”).

And I also understand that we all come from a variety of backgrounds and educational skills. Some people know less stuff than I do, some people know waaaay more than I do. I personally appreciate when someone corrects me.

In the end, this is just lemmy, so I don’t take things too seriously here (in spite of this lengthy essay, lol!) This is an escape for me. If you got this far, thanks for reading.

Kubernetes uses cri-o nowadays. If you’re using Kubernetes with the intent of exposing your Docker sockets to your workloads, that’s just asking for all sorts of fun, hard-to-debug trouble. It’s best not to tie yourself to your k8s cluster’s underlying implementation; you get a lot more portability, since most cloud providers won’t even let you do that on a managed cluster.

If you want something more akin to how kubernetes does it, there’s always nerdctl on top of the containerd interface. However nerdctl isn’t really intended to be used as anything other than a debug tool for the containerd maintainers.

Not to mention Podman can just launch Kubernetes workloads locally, à la Docker Compose, now.

Yeah I saw this post and thought “what a coincidence, I’m looking to move from docker!”

Everybody’s going somewhere, I suppose.

podman generate systemd really sold it for me. Also the auto update feature is great. No more need for watchtower.
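For anyone curious what that looks like, here is a minimal sketch. The function name and the container name `web` are made up for illustration, and `PODMAN` is only a variable so the command can be dry-run with `PODMAN=echo`. Recent Podman documentation marks `generate systemd` as deprecated in favour of Quadlet, so check what your version recommends.

```shell
#!/usr/bin/env bash
# Sketch only: "web" is a placeholder container name.
PODMAN="${PODMAN:-podman}"   # set PODMAN=echo to print the command instead of running it

# Write a systemd unit for an existing container.
#   --new    the unit creates a fresh container on every start
#   --files  write container-<name>.service into the current directory
generate_unit() {
  local name="$1"
  $PODMAN generate systemd --new --files --name "$name"
}
```

After `generate_unit web`, the resulting unit file is typically moved to `~/.config/systemd/user/` and enabled with `systemctl --user enable --now container-web.service`. For auto-updates, the container needs the label `io.containers.autoupdate=registry`, and `podman auto-update` then pulls newer images and restarts the units.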

My one… battlefield with Docker was trying to run a WireGuard VPN in tandem with an AdGuard DNS filter without nftables/iptables throwing a raging bitch fit over it. Both WireGuard and Docker edit your table entries, in different orders, and literally nothing I did made any difference: staggering WireGuard’s load time, making the entries myself before Docker starts (then resolvconf breaks for no reason). Oh, and they also share a system with a qBittorrent container that connects to a VPN of its own before starting. Yay!

And that’s why all of that is on a raspberry pi now and will never be integrated back into the image stacks on my main server.

Just… fuck it, man. I can’t do it again. It’s too much.

Docker networking is hell

I wrote this: https://github.com/josefwells/nft_tool

Almost exactly your same situation, I got mad and took control of my firewall.

Yes, I would set up the containers empty, then import your data however the applications want it. Either by importing via their web interface, or by dropping it in their bound directory.

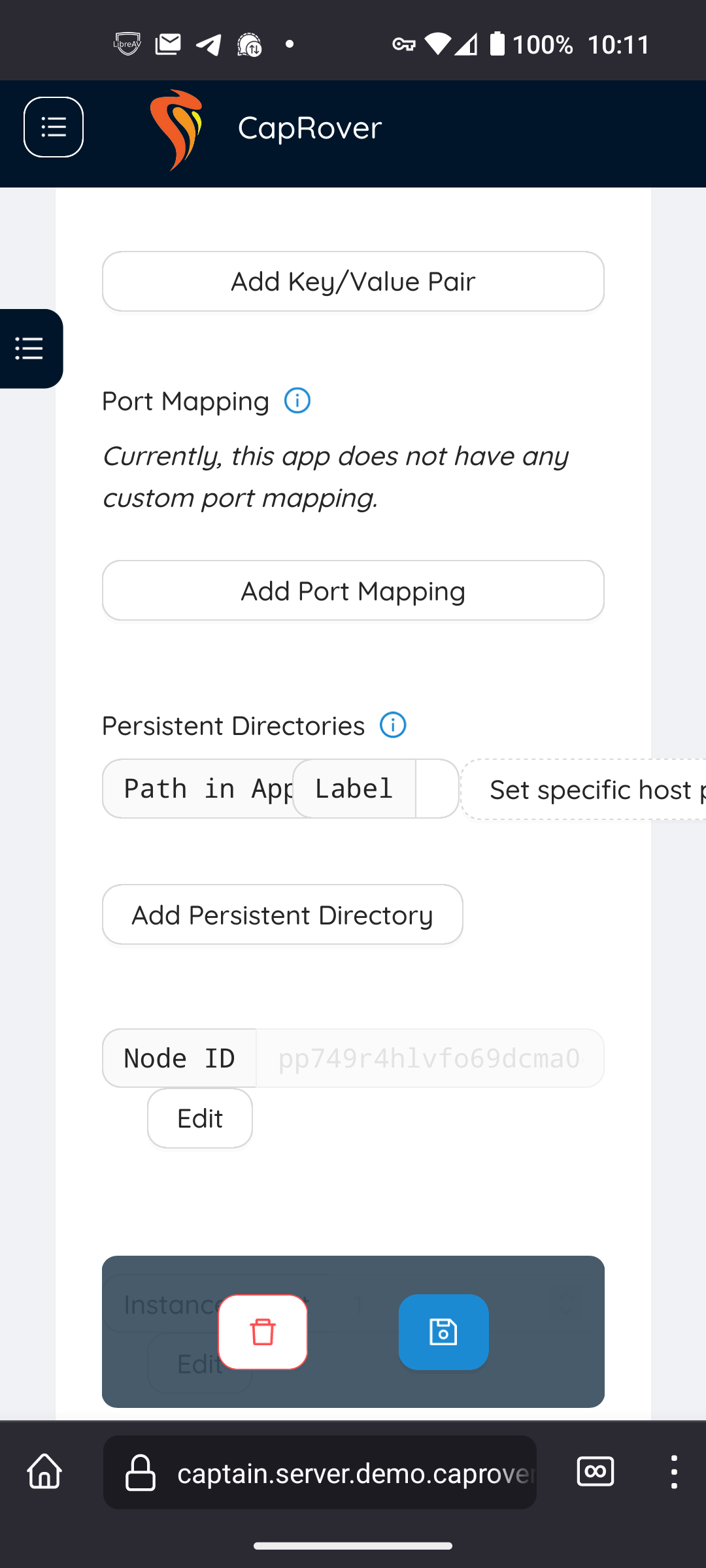

Thanks! So, here in the CapRover demo config for WordPress, the path says: var/www

This is the regular var/www? Not a different one for the Wordpress container?

I would just simply put my current WP files (from public-html) in that directory?

Do the apps all share a db?

Thanks! I will have to research volumes! Bind mount - that would mean messing with fstab, yes? I set up a bind for my desktop but entering mounts in fstab has borked me more than once!

No, it’s declared in the compose file or the docker run command: you specify a folder on the host and the path it should appear at inside the container. No fstab needed.
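To make that concrete, here is a small sketch of the `docker run` form using the long `--mount` syntax. The function name, host path and image are placeholders, and `DOCKER` is a variable only so the command can be dry-run with `DOCKER=echo`.

```shell
#!/usr/bin/env bash
# Sketch only: host path, target path and image are placeholders.
DOCKER="${DOCKER:-docker}"   # set DOCKER=echo to print the command instead of running it

# Start a container with a host directory bind mounted at a path inside it.
# Nothing is written to /etc/fstab; the mount exists only for this container.
bind_mount_run() {
  local host_dir="$1" target="$2" image="$3"
  # --mount refuses to start if the source directory is missing, so create it first
  mkdir -p "$host_dir"
  $DOCKER run -d --mount "type=bind,source=$host_dir,target=$target" "$image"
}
```

`bind_mount_run /srv/wordpress /var/www/html wordpress` would be one way to call it. The source has to be an absolute path on the host.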

I’ll try to answer the specific question here about importing data and sandboxing. You wouldn’t have to sandbox, but it’s a good idea. If we think of a Docker container as an “encapsulated version of the host”, then let’s say you have:

- Service A running on your cloud
  - Requires `apt-get install -y this that and the other` to run
  - Uses data in `/data/my-stuff`
- Service B running on your cloud
  - Requires `apt-get install -y other stuff` to run
  - Uses data in `/data/my-other-stuff`

In the cloud, the Service A data can be accessed by Service B, which widens the attack surface for a leak. In Docker, you could move all your data from the cloud to your server:

```bash
# On cloud
cd /
tar cvfz data.tgz data

# Copy /data.tgz to /tmp on the local server, then on the local server:
mkdir -p /local/server
cd /local/server
tar xvfz /tmp/data.tgz
# Now you have /local/server/data as a copy
```

Your Dockerfile for Service A would be something like:

```dockerfile
FROM ubuntu
# Refresh the package index first, or the install will fail on a fresh image
RUN apt-get update && apt-get install -y this that and the other
RUN whatever to install Service A
CMD whatever to run
```

Your Dockerfile for Service B would be something like:

```dockerfile
FROM ubuntu
RUN apt-get update && apt-get install -y other stuff
RUN whatever to install Service B
CMD whatever to run
```

This makes two unique “systems”. Now, in your docker-compose.yml, you could have:

```yaml
version: '3.8'
services:
  service-a:
    image: service-a
    volumes:
      - /local/server/data:/data
  service-b:
    image: service-b
    volumes:
      - /local/server/data:/data
```

This would make everything look just like the cloud, since `/local/server/data` would be bind mounted to `/data` in both containers (services). The proper way would be to isolate:

```yaml
version: '3.8'
services:
  service-a:
    image: service-a
    volumes:
      - /local/server/data/my-stuff:/data/my-stuff
  service-b:
    image: service-b
    volumes:
      - /local/server/data/my-other-stuff:/data/my-other-stuff
```

This way each service only has access to the data it needs.
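One way to spot-check the isolated layout above is to list `/data` from inside each running service. This is only a sketch: the function name is made up, the service names are the ones from the compose example, and `DOCKER` is a variable so the command can be dry-run with `DOCKER=echo`.

```shell
#!/usr/bin/env bash
# Sketch only: run from the directory that holds docker-compose.yml,
# with the services already started.
DOCKER="${DOCKER:-docker}"   # set DOCKER=echo to print the command instead of running it

# List what a compose service can see under a path inside its container.
list_service_data() {
  local service="$1" path="${2:-/data}"
  $DOCKER compose exec "$service" ls "$path"
}
```

With the isolated compose file, `list_service_data service-a` should show only `my-stuff`, and `list_service_data service-b` only `my-other-stuff`.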

I hand typed this, so forgive any errors, but hope it helps.

You can copy files into the Docker image via a COPY in the Dockerfile, or you can mount a volume to share data from the host filesystem into the container at runtime.
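The COPY route could look roughly like this. It is a sketch under assumptions: the function name, the `data/` directory and the image tag are placeholders, and `DOCKER` is a variable only so the build can be dry-run with `DOCKER=echo`.

```shell
#!/usr/bin/env bash
# Sketch only: expects a data/ directory inside the build context directory.
DOCKER="${DOCKER:-docker}"   # set DOCKER=echo to print the command instead of running it

# Bake the data into the image: write a Dockerfile next to the data, then build.
build_image_with_data() {
  local context_dir="$1" tag="$2"
  cat > "$context_dir/Dockerfile" <<'EOF'
FROM ubuntu
# Copy ./data from the build context into the image at /data
COPY data/ /data/
EOF
  $DOCKER build -t "$tag" "$context_dir"
}
```

The trade-off is that data baked in with COPY is frozen at build time, and anything the container writes on top of it is lost when the container is removed. For data that changes, such as uploads or a database, a bind mount or volume is the better fit.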

Acronyms, initialisms, abbreviations, contractions, and other phrases which expand to something larger, that I’ve seen in this thread:

| Fewer Letters | More Letters |
|---|---|
| DNS | Domain Name Service/System |
| VPN | Virtual Private Network |
| k8s | Kubernetes container management package |

3 acronyms in this thread; the most compressed thread commented on today has 8 acronyms.

[Thread #104 for this sub, first seen 3rd Sep 2023, 01:05]